Why AI Can Make Software Development Cheaper, But Not by as Much as You Think

The productivity gains are real. So is the hype.

April 1, 2026 by Yuriy Frankiv · 7 min read

I needed a CRM. Not just any CRM. I wanted one that could actually work the way I work. I tried several existing tools. They either missed features I cared about, forced me into workflows that didn't fit, or wanted a monthly fee that didn't make sense for a one-person consultancy. So I did what any developer would do: I decided to build my own.

But this time, I had a plan. I was going to build it with AI and use the project as a clean experiment to measure how much more productive AI actually makes me. This is not really a story about the CRM, though. It is a story about what happened when I built it using Claude Code, and what I did not expect along the way.

What I Wanted to Build

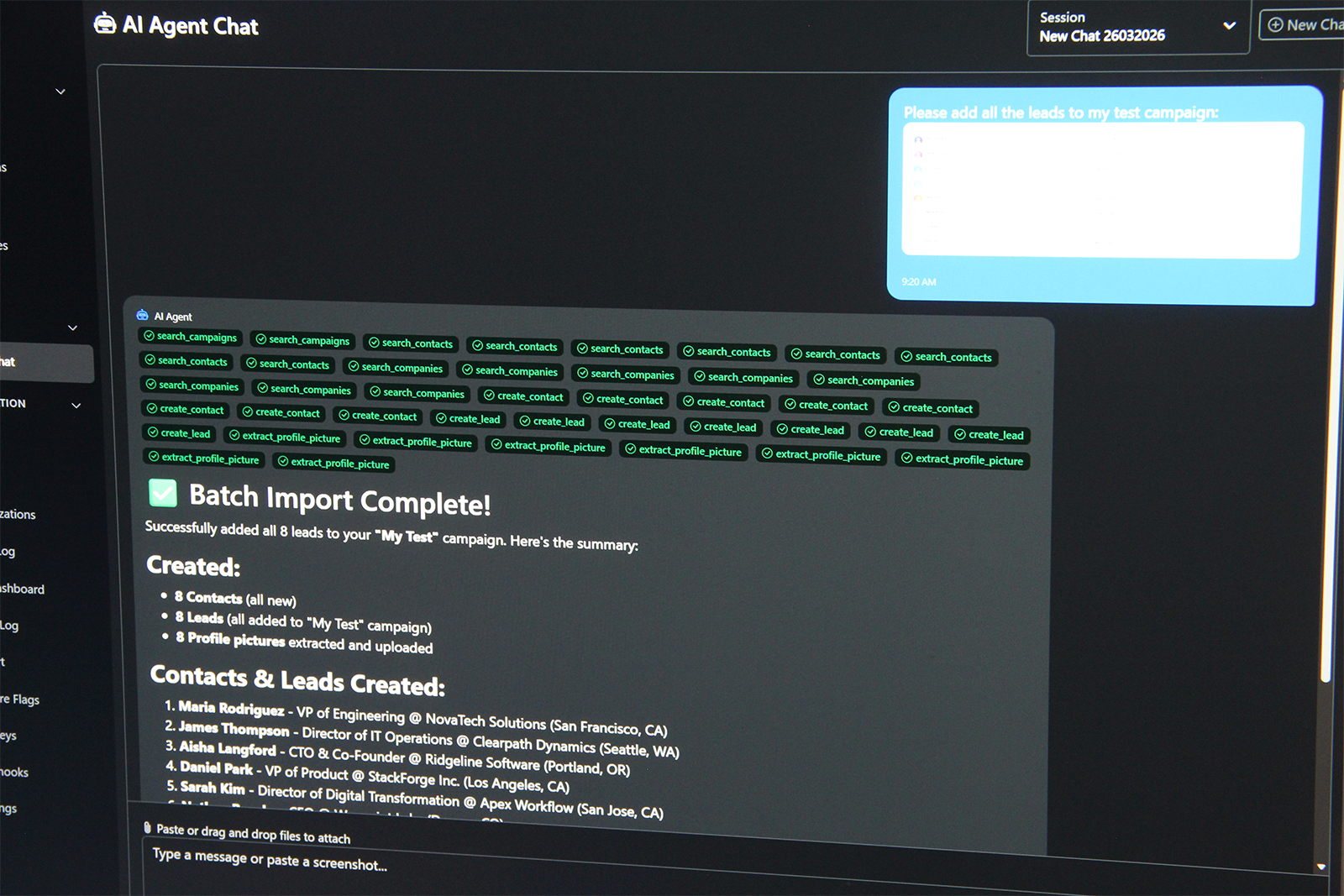

The goal wasn't just another contacts list. I wanted something genuinely smart: drop in a batch of emails, screenshots of LinkedIn profiles, photos of business cards, and have the system process all of it, extract the relevant data, organize it in the database, and draft personalized follow-up messages. An AI-powered CRM, built with AI assistance.

Before I wrote a single line of code, I sat down with Claude and worked through the full plan: architecture, feature specs, and prompts for Claude Code.

The First Two Weeks Were Great

And honestly, they were. I had working features faster than I expected. The CRM was running, I was using it for real client work, and it was already saving me time. The initial impression you get from tools like Claude Code is hard to overstate. It genuinely feels like: I can build whatever I want in days.

That impression is not entirely wrong. You can build things fast, if you plan carefully and review what's being generated.

That second part is where I made my mistake.

Then the Velocity Dropped

In the excitement of getting something working, I was barely reading the code coming out of the agent. I trusted my initial instructions and let it run. For a couple of weeks, that worked well enough. Then it stopped working well.

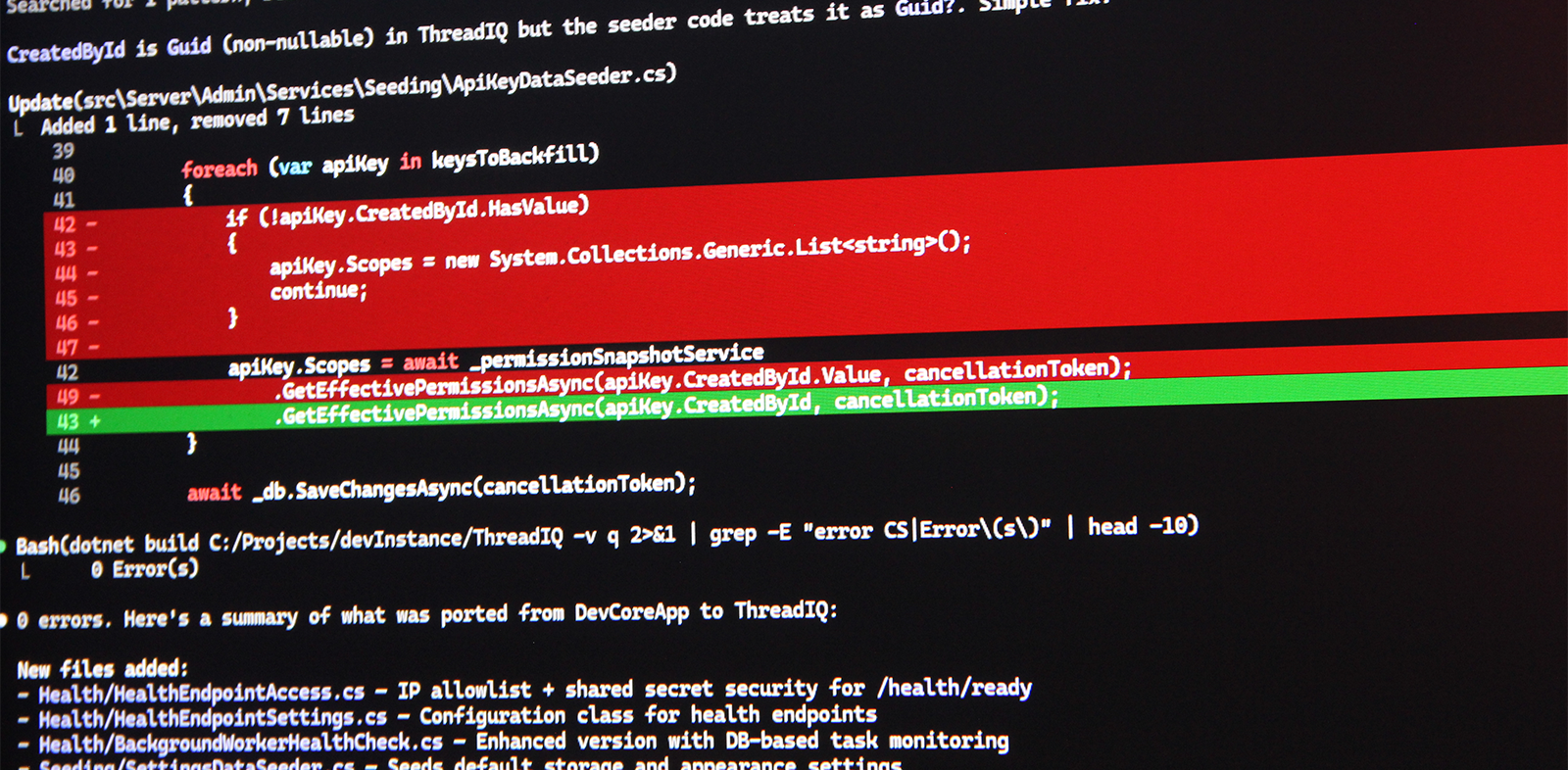

Crashes started showing up. Small bugs, inconsistencies in behavior, edge cases that weren't handled. The kind of technical debt that builds quietly until it doesn't.

I noticed something else too: long coding sessions seemed to dilute the agent's memory of the original spec. After enough compaction cycles, where Claude Code summarizes the earlier conversation to free up context, it started making decisions that didn't match what I had written. The instructions were there, but the signal had gotten weaker.

When I finally sat down and read through the codebase properly, I found what I expected to find after "just make it work" development: imperfect solutions in a lot of places, multithreading issues, async operations that weren't handled right, broken contracts between components. The kind of stuff a senior developer catches during code review, not after the fact.

If I had been reviewing the output as the agent worked, treating it like I was the tech lead on a team of one, I would have caught most of that before it compounded into a mess.

The Fundamental Problem Nobody Talks About

There's a habit in AI hype cycles of treating the rough edges as implementation details that will get smoothed out. Better models, better context windows, better tooling. But I think there's something more fundamental going on.

A coding agent doesn't reason the way a developer does. It generates code based on patterns from enormous amounts of training data. That's a genuinely useful capability. But it is not the same thing as understanding a system, thinking through implications, or caring whether the code is good.

That gap shows up in the work. And you only see it if you're reading the code.

I also want to name the thing nobody says out loud: while the agent is coding, you have to wait for it. If you want to review what's being generated (and you should), that creates a forcing function that limits how much you can parallelize. You are not freed from the work. You are redirected within it.

Is My Stack Part of It?

Maybe. I work in C#, ASP.NET Core, and Blazor. There's arguably more training data for JavaScript and React, and the agent behavior might be meaningfully better in that context. I don't know for certain.

But I don't think the technology stack is the whole explanation. The deeper issue is that AI-assisted coding is often described as nearly solved, and it isn't. Not for complex systems. Not yet.

So What's the Honest Assessment?

AI makes software development somewhat faster and somewhat cheaper. Not 10x. Probably not 3x in most real-world projects. The gains are real, but they are not magic.

Where it genuinely helps:

- Iteration speed on smaller, well-scoped features

- Handling boilerplate: forms, validation logic, CRUD scaffolding

- Documentation, UI layout, and the kind of work you need done but don't want to think hard about

- Faster feedback cycles with clients because you can show something sooner

Where the limits show up:

- Complex systems where architectural decisions compound over time

- Code that requires real understanding of state, concurrency, and system contracts

- Long projects where accumulated low-quality output creates drag that slows everything down

- Anywhere the developer stops reading what the agent produces

Some software products can genuinely be completed with vibe coding: simple tools, internal utilities, MVPs that don't need to scale. But enterprise-grade ERP systems, products that need to be maintained for years, and applications with real performance and reliability requirements still need experienced developers making real decisions.

The business implication is interesting, though. More companies can now afford to build something tailored to their needs rather than buying a generic SaaS product. That will put pressure on some vendors in the mid-market. But it won't eliminate them, and it won't replace the developers who know what they're doing.

Where I Landed

My CRM works. I use it every day. The AI assistance genuinely helped me build it faster than I would have otherwise, and the AI-powered features inside it (the lead import from images and emails) are legitimately useful.

But the development process was not the smooth, frictionless experience the demos suggest. It required real engineering judgment, real code review, and a real willingness to fix things when the agent got it wrong.

AI-assisted development is already here and it is already helping. It is also significantly overhyped. Both things can be true at once, and I think being honest about both is more useful than picking a side.

That said, I am not done experimenting. I am currently working on a much more ambitious project, and this time I am going in with everything I learned from the CRM. Better structure upfront, tighter review cycles, and a clearer sense of where the agent needs a short leash and where it can run. If the approach I have in mind works the way I think it will, I am genuinely hoping to push well past what I thought was possible. I will write about it when I have real results to share.
